Less flexibility in supporting different log structures or applications that write to log files instead of streams. The logs are available via the kubectl command for debugging, as the log files are available to kubelet which returns the content of the log file. Node-level logging is easier to implement because it hooks into the existing file based logging and is less resource intensive than a sidecar approach as there are less containers running per node. Now that we’ve gone over both the DaemonSet and sidecar approaches, let’s get acquainted with the pros and cons of each. Each pod contains a logging agent like Fluentd or Filebeat, which captures the logs from the application container and sends them directly to the storage backend, as illustrated in figure 3.įigure 3: Logging agent sidecar pattern Pros and Cons You can see this pattern in figure 2.Īnother approach is the logging agent sidecar, where the sidecar itself ships the logs to the storage backend. The streaming sidecar can also bring parity to the log structure by transforming the log messages to standard log format. In that case, you can use a streaming sidecar container to publish the logs from the file to its own stdout/stderr stream, which can then be picked up by Kubernetes itself. The streaming sidecar is used when you are running an application that writes the logs to a file instead of stdout/stderr streams, or one that writes the logs in a nonstandard format.

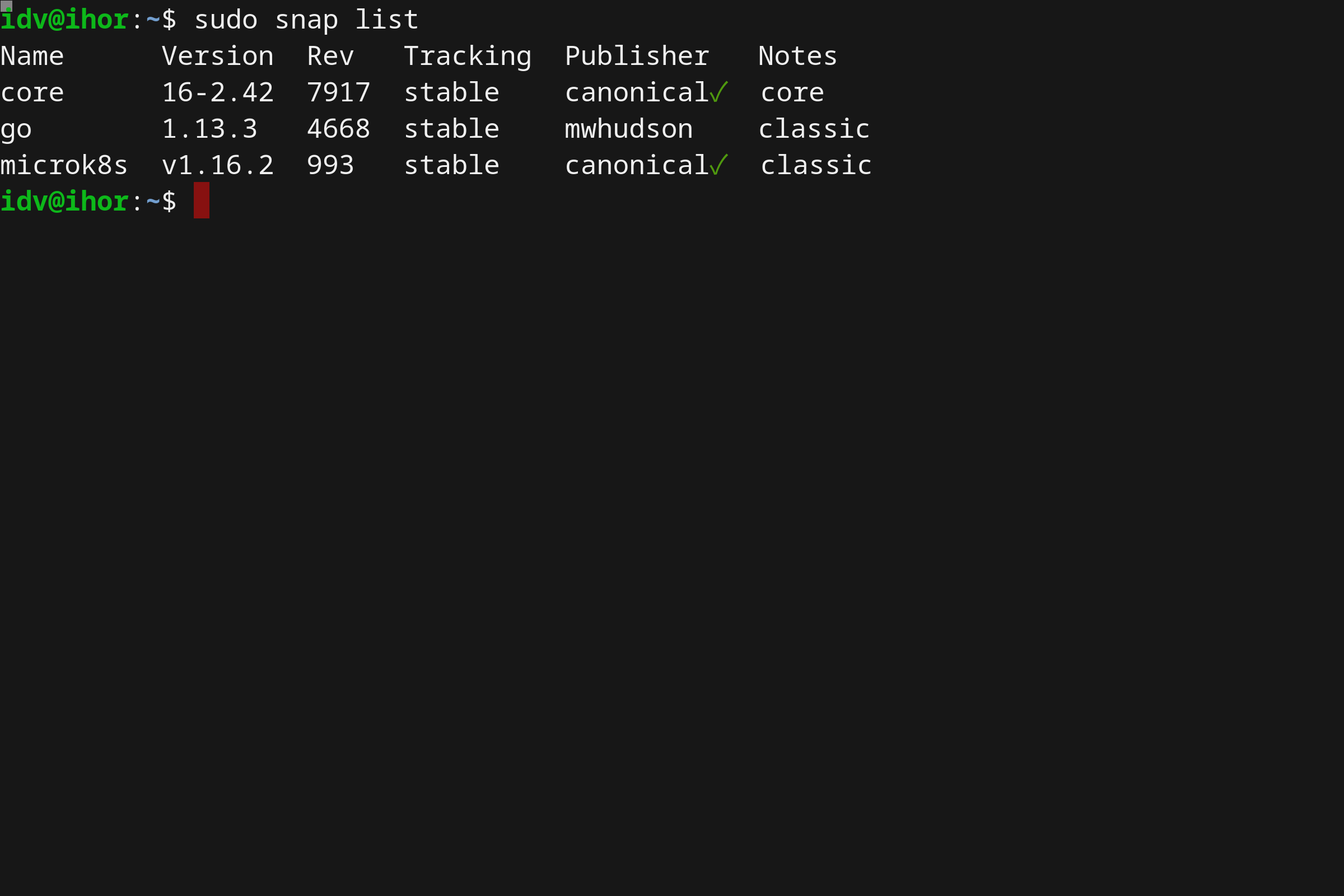

This sidecar can be of two types, streaming sidecar or logging agent sidecar. Sidecar patternĪlternatively, in the sidecar pattern a dedicated container runs along every application container in the same pod. You can see a diagram of this configuration in figure 1.įigure 1: A logging agent running per node via a DaemonSet 2. This logging agent is configured to read the logs from /var/logs directory and send them to the storage backend. Deploying a DaemonSet ensures that each node in the cluster has one pod with a logging agent running. In the DaemonSet pattern, logging agents are deployed as pods via the DaemonSet resource in Kubernetes. The two most prominent patterns for collecting logs are the sidecar pattern and the DaemonSet pattern. Now that we understand which components of your application and cluster generate logs and where they’re stored, let’s look at some common patterns to offload these logs to separate storage systems. If systemd is available on the machine the components write logs in journald, otherwise they write a. The other system components ( kubelet and container runtime itself) run as a native service. Some of the system components (namely kube-scheduler and kube-proxy) run as containers and follow the same logging principles as your application. The other source of logs are system components. These container logs can be fetched anytime with the following command: In a Kubernetes cluster, both of these streams are written to a JSON file on the cluster node. Your application runs as a container in the Kubernetes cluster and the container runtime takes care of fetching your application’s logs while Docker redirects those logs to the stdout and stderr streams. In a Kubernetes cluster there are two main log sources, your application and the system components. In this article we’ll discuss how to implement this approach in your own Kubernetes cluster. Storing logs off of the cluster in a storage backend is called cluster-level logging. 
These separate backends include systems like Elasticsearch, GCP’s Stackdriver, and AWS’ Cloudwatch. Logs are an effective way of debugging and monitoring your applications, and they need to be stored on a separate backend where they can be queried and analyzed in case of pod or node failures. As more companies run their services on containers and orchestrate deployments with Kubernetes, logs can no longer be stored on machines and implementing a log management strategy is of the utmost importance. This worked on long-lived machines, but machines in the cloud are ephemeral.

They never left the machine disk and the operations team would check each one for logs as needed. Historically, in monolithic architectures, logs were stored directly on bare metal or virtual machines.
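To make the DaemonSet pattern from this article more concrete, here is a minimal sketch that builds a DaemonSet manifest in Python and prints it as JSON. The agent name `log-agent`, the image `example.com/log-agent:1.0`, and the `kube-system` namespace are placeholders chosen for illustration, not values from the article, and a real agent such as Fluentd or Filebeat would also need its own configuration for the storage backend.

```python
import json


def logging_daemonset(name: str, image: str, namespace: str = "kube-system") -> dict:
    """Build a DaemonSet manifest that runs one logging agent pod per node."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "DaemonSet",
        "metadata": {"name": name, "namespace": namespace, "labels": labels},
        "spec": {
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,  # placeholder image, an assumption
                            # Mount the node's log directory read-only into the agent.
                            "volumeMounts": [
                                {"name": "varlog", "mountPath": "/var/log", "readOnly": True}
                            ],
                        }
                    ],
                    # hostPath exposes the node's own /var/log to the pod.
                    "volumes": [{"name": "varlog", "hostPath": {"path": "/var/log"}}],
                },
            },
        },
    }


manifest = logging_daemonset("log-agent", "example.com/log-agent:1.0")
print(json.dumps(manifest, indent=2))
```

Because kubectl accepts JSON as well as YAML, the printed manifest could be piped to `kubectl apply -f -`, assuming the placeholder image is swapped for a real agent image first.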

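The streaming sidecar described in this article can be sketched in a few lines of Python: follow a log file, convert each line to a common structure, and write it to the sidecar's own stdout so Kubernetes can pick it up. This is an illustration under stated assumptions rather than a production agent. The path `/var/log/app/app.log`, the application name `my-app`, and the JSON field names are hypothetical, and the sketch ignores log rotation.

```python
import json
import sys
import time
from typing import Callable, Iterator, TextIO


def follow(source: TextIO, should_stop: Callable[[], bool],
           poll_seconds: float = 0.5) -> Iterator[str]:
    """Yield complete lines appended to `source`, similar to `tail -f`."""
    pending = ""
    while True:
        chunk = source.readline()
        if chunk:
            pending += chunk
            if pending.endswith("\n"):
                yield pending.rstrip("\n")
                pending = ""
            continue
        # Nothing new to read: either stop or wait for the app to write more.
        if should_stop():
            if pending:
                yield pending
            return
        time.sleep(poll_seconds)


def to_standard_format(line: str, app_name: str) -> str:
    """Wrap a raw log line in a JSON envelope (field names are assumptions)."""
    return json.dumps({"app": app_name, "message": line})


def run_sidecar(source: TextIO, sink: TextIO, app_name: str,
                should_stop: Callable[[], bool], poll_seconds: float = 0.5) -> None:
    """Copy every line from the log file to the sink in the standard format."""
    for line in follow(source, should_stop, poll_seconds):
        sink.write(to_standard_format(line, app_name) + "\n")
        sink.flush()


def main() -> None:
    # Hypothetical path on a volume shared with the application container.
    with open("/var/log/app/app.log", encoding="utf-8") as log_file:
        run_sidecar(log_file, sys.stdout, "my-app", should_stop=lambda: False)
```

Calling `main()` as the sidecar container's entrypoint would stream the file indefinitely. The `should_stop` callback exists mainly so the loop can be ended cleanly, for example in tests.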